Nate Silver’s The Signal and the Noise has much to recommend it. It is about prediction generally, rather than being focused specifically on baseball or politics where he built his reputation. He also notes challenges with statistical models, specifically the problem of overfitting.

The Problem Of Overfitting

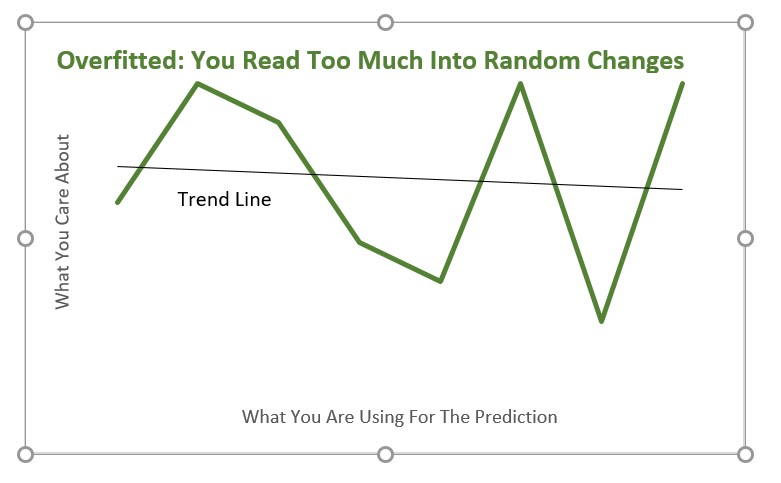

A problem Silver addresses is the overfitting of mathematical models. Overfitting occurs when a model is great at explaining the data we already have yet poor at predicting anything else. Such a model is excellent at saying which companies did well last year, but that is pretty useless if it can’t predict next year’s successes. We have “…an overly specific solution to a general problem” (Silver, 2012, page 163).

Overfitting represents a double whammy: it makes our model look better on paper but perform worse in the real world.

Silver, 2012, page 167
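To make the double whammy concrete, here is a minimal sketch in Python. It is my own toy illustration, not an example from Silver's book. Everything in it is an assumption: the made-up data (a straight line plus random noise), the sample sizes, and the two hypothetical helpers `fit_line` and `fit_memorizer`. The "memorizer" simply repeats the outcome of the closest case it has already seen, so it explains last year perfectly.

```python
import random


def fit_line(xs, ys):
    """Ordinary least squares for y = intercept + slope * x (closed form)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return lambda x: intercept + slope * x


def fit_memorizer(xs, ys):
    """Overfit model: return the y of the nearest x seen during fitting."""
    pairs = list(zip(xs, ys))
    return lambda x: min(pairs, key=lambda p: abs(p[0] - x))[1]


def mse(model, xs, ys):
    """Mean squared error of a model on a data set."""
    return sum((model(x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def make_data(rng, n):
    """Assumed toy world: y = 2x plus Gaussian noise (standard deviation 3)."""
    xs = [rng.uniform(0, 10) for _ in range(n)]
    ys = [2 * x + rng.gauss(0, 3) for x in xs]
    return xs, ys


rng = random.Random(0)
train_x, train_y = make_data(rng, 50)   # "last year": data we fit on
test_x, test_y = make_data(rng, 200)    # "next year": data we never saw

line = fit_line(train_x, train_y)
memorizer = fit_memorizer(train_x, train_y)

results = {
    "line": (mse(line, train_x, train_y), mse(line, test_x, test_y)),
    "memorizer": (mse(memorizer, train_x, train_y), mse(memorizer, test_x, test_y)),
}

for name, (train_err, test_err) in results.items():
    print(f"{name:10s} train MSE = {train_err:6.2f}   test MSE = {test_err:6.2f}")
```

The memorizer makes no errors at all on the data it was fitted to, because it has absorbed the noise along with the signal. On fresh data that noise works against it, and the humble straight line should forecast better. That is the pattern Silver describes: better on paper, worse in the real world.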

The Importance Of Theory

Overfitting often occurs because your model chases randomness in the data. You find something that correlates with something else but have no good reason to put the two together. Given enough data, you will always be able to find numerous statistical relationships, so you need theory to make a connection plausible. By theory I don’t mean anything too grand. Academic papers need stronger theory than business models, but even business models must pass a sniff test.

If you can’t think of any plausible way a variable could impact another variable, you shouldn’t take the relationship seriously. This is true even if the connection seems statistically strong.

A once-famous “leading indicator” of economic performance, for instance, was the winner of the Super Bowl…. [NFL versus AFL] … this indicator had correctly “predicted” the direction of the stock market in twenty-eight of thirty-one years. A standard test of statistical significance, if taken literally, would have implied that there was only about a 1-in-4,700,000 possibility that the relationship had emerged from chance alone.

Silver, 2012, page 185
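A quick simulation shows how easily an impressive-looking indicator can emerge from chance alone once you screen enough candidates. This is a sketch under loud assumptions, and it does not reproduce Silver's calculation. The "market" and every "indicator" are nothing but coin flips, and the number of candidates (`N_INDICATORS`) is a figure I picked for illustration. Only the 31-year window is borrowed from the Super Bowl example.

```python
import random

YEARS = 31            # same window length as the Super Bowl example
N_INDICATORS = 5000   # hypothetical number of candidate indicators screened

rng = random.Random(42)


def coin_flips(n):
    """A series of n independent up/down outcomes, each 50/50."""
    return [rng.random() < 0.5 for _ in range(n)]


def hits(indicator, market):
    """Number of years in which the indicator matched the market."""
    return sum(i == m for i, m in zip(indicator, market))


# The market's direction in the years we study, and in the years that follow.
market_past = coin_flips(YEARS)
market_future = coin_flips(YEARS)

# Each candidate indicator is pure noise: a past record and a future record.
indicators = [(coin_flips(YEARS), coin_flips(YEARS)) for _ in range(N_INDICATORS)]

# Pick the indicator with the best track record on the past data.
best_past, best_future = max(indicators, key=lambda pair: hits(pair[0], market_past))

best_in_sample = hits(best_past, market_past)
best_out_of_sample = hits(best_future, market_future)

print(f"Best indicator, past {YEARS} years:   {best_in_sample} correct")
print(f"Same indicator, next {YEARS} years:   {best_out_of_sample} correct")
```

The winning indicator has a track record well above the 15 or 16 correct calls you would expect from a single coin, yet by construction it knows nothing about the market. Its record in the following years is just another set of coin flips. A significance test applied to the winner alone, ignoring the thousands of losers, would badly overstate how unlikely the result is.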

Big Data And Meaningless Overfitting

I totally agree with Silver’s point. In a world of Big Data there are any number of meaningless chance correlations to be found. So if anything we need theory more than ever to stop us believing that coincidental nonsense — marketing astrology — means something. I’ll leave Silver with the last word.

This kind of statement is becoming more common in the age of Big Data. Who needs theory when you have so much information? But this is categorically the wrong attitude to take toward forecasting, especially in a field like economics where the data is noisy. Statistical inferences are much stronger when backed up by theory or at least some deeper thinking about their root causes.

Silver, 2012, page 197

For more on the importance of theory see here.

Read: The Signal and the Noise, Nate Silver, The Penguin Press, New York, 2012

https://www.amazon.com/The-Signal-and-Noise-Nate-Silver-audiobook/dp/B009HL6444/