As algorithms play greater and greater roles in our lives, a reasonable question is: “are they fair?” The answer is often: “no, not really”. To be clear, that doesn’t necessarily mean algorithms are making the world worse. If things were unfair before (and they were), then just knowing that things are unfair now can’t tell you the direction of change. That said, getting rid of any unfairness (bias) is clearly a worthy goal. So what should we think about bias and algorithms?

Greater Use Of Algorithms

In a Customer Needs and Solutions article, Jerome Williams and his colleagues discuss the implications of the greater use of algorithms. They start by pointing out that algorithms can improve the world:

“The use of algorithms can be highly beneficial and efficient to make statistical decisions in settings where data are voluminous.”

Williams, Lopez, Shafto and Lee (2019)

Bias And Algorithms

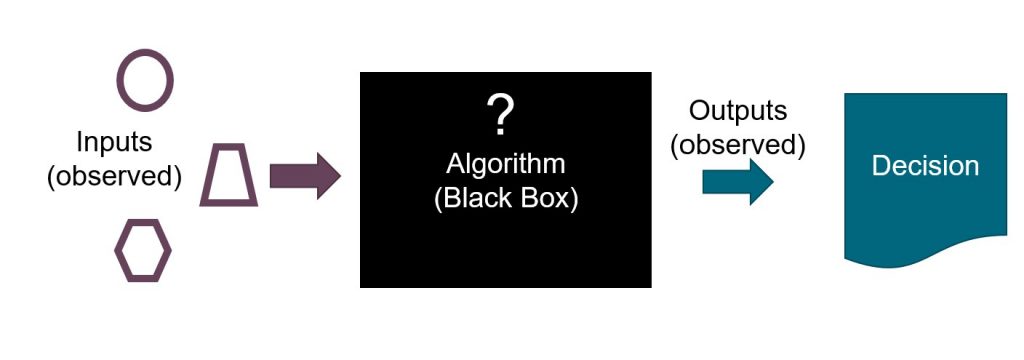

The authors do, however, rightly worry about the problem of bias in algorithms. A challenge is that algorithms can be pretty opaque. They tell us what to do: in banking, perhaps, who to give a loan to. That said, it isn’t always clear why some people were chosen and others were not. Whenever that happens, there is a concern that bias has crept in.

The authors make the case that it is critical to try to address any such bias. They pick apart where bias can creep in: it can arise from the data we use, from the algorithm we run on the data, or from the interaction between the data and the algorithm.
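One simple way to start looking for this kind of bias is to compare outcomes across groups. The sketch below is only an illustration, not the authors' method: the loan decisions are made up, and the group labels `A` and `B` and the function name `approval_rates` are hypothetical.

```python
from collections import defaultdict

# Hypothetical loan decisions as (group label, approved?) pairs.
# This data is invented purely for illustration.
decisions = [
    ("A", True), ("A", True), ("A", True), ("A", False),
    ("B", True), ("B", False), ("B", False), ("B", False),
]


def approval_rates(records):
    """Return the share of approved applications for each group."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in records:
        totals[group] += 1
        if ok:
            approved[group] += 1
    return {group: approved[group] / totals[group] for group in totals}


rates = approval_rates(decisions)
# Difference between the highest and lowest approval rate across groups.
gap = max(rates.values()) - min(rates.values())

print(rates)
print(gap)
```

A large gap does not prove that the algorithm is biased, and a small one does not prove it is fair. It only flags where a closer look at the data, the algorithm, or their interaction may be worthwhile.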

They conclude with ideas to improve things. A key solution they see is having a diverse workforce in the tech industry. That makes sense. Even if you aren’t aiming to be unfair, it is, at a minimum, harder to spot unfairness that doesn’t apply to you.

This topic is only going to get bigger in the coming years. It is good to start thinking about it more deeply.


Read: Jerome D. Williams, David Lopez, Patrick Shafto and Kyungwon Lee (2019) Technological Workforce and Its Impact on Algorithmic Justice in Politics, Customer Needs and Solutions, December 2019, Volume 6, Issue 3–4, pp. 84–91.