Artificial Intelligence (AI) has thrown up all sorts of questions for business and society. What, then, of artificial intelligence and its challenges?

What To Do With It

One of the first problems was recognizing what to do with it. Nowadays we can see AI making a considerable impact on the world, but it wasn’t always obvious that this would happen. In their (brief) review of AI, Michael Haenlein and Andreas Kaplan give a great anecdote about government funding for AI being seen as a bit of a waste.

“…in 1973, the U.S. congress started to strongly criticize the high spending on AI research. In the same year, the British Mathematician James Lighthill published a report commissioned by the British Science Research Council in which he questioned the optimistic outlook given by AI researchers. Lighthill stated that machines would only ever reach the level of an ‘experienced amateur’ in games such as chess and that common-sense reasoning would always be beyond their abilities.”

(Haenlein and Kaplan, 2019, page 7)

This was a remarkably bad prediction; they could have done with an AI to help them make it. To be fair, it took quite a while for AI to reach the levels it has now. (1973 was a long time ago: it was the year the UK joined the European Community. Looking back from 2020, it is quite possible that it isn’t just AI getting better that is changing the balance. Human beings’ capacity for common-sense reasoning appears to have declined considerably in the last 47 years.)

Addressing Artificial Intelligence And Its Challenges

Haenlein and Kaplan note several challenges for AI and some ways to address them. Many decision support systems can be racially biased. This may be because they are largely trained on pictures of Caucasians and so recognize Caucasian faces better. The rather obvious solution is to change the way AIs are trained to recognize the world. The authors suggest developing “commonly accepted requirements regarding the training and testing of AI algorithms” (Haenlein and Kaplan, 2019, page 11).

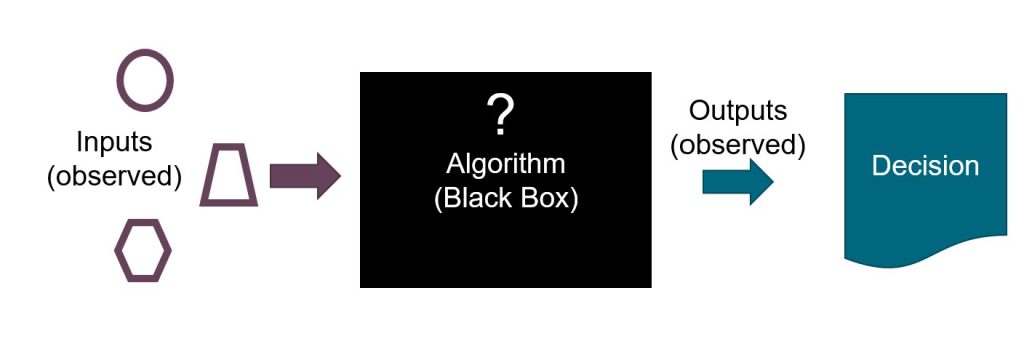

The Black Box Problem

They also point to the black box problem. We know what goes into an AI and what comes out, but what happens in between can be a bit of a mystery. This is great for firms wanting to protect their secret sauce, but it presents significant public policy challenges. We need better ways to regulate how companies and states use AI. This will not be easy, nor will it ever be perfect. Still, I think we can do a better job if we try a bit harder than we currently do.


Read: Michael Haenlein and Andreas Kaplan (2019) A Brief History of Artificial Intelligence: On the Past, Present and Future of Artificial Intelligence, California Management Review 61 (4), pages 5-14